The UK is trialling its Covid-19 contact tracing application, which tracks interactions between people. The app uses Bluetooth Low Energy (BLE) communication between smartphones to register the duration and distance of each handshake. This data is then uploaded to a centralised database so that if a user self-registers as having Covid-19 symptoms, the centralised service can push notifications to all ‘contacts’. This is a highly centralised model based on relaying all users’ ‘Contact Events’ together with user self-assessments of Covid-19 symptoms.

A user can self-report having the symptoms of Coronavirus. They cannot report a positive test for Coronavirus, as there is no way of entering either an NHS_ID or a Test_ID. Technically, the UK mobile app does not match the mobile App ID against the user’s NHS ID, and there is no mapping between the app and NHS England’s Epic system. This approach allows for greater anonymity, as the centralised database will not record a user’s NHS identifier. The downside of this approach will be a higher percentage of false positives and therefore more contact notifications.

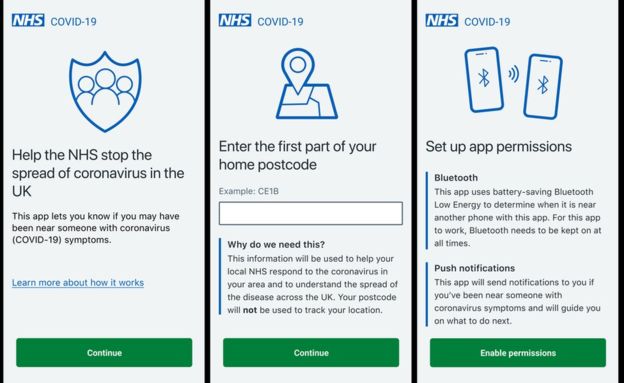

NHSX and Pivotal, the software development firm, have published the App’s source code and the App’s Data Protection Impact Assessment. The latter is a mandatory document within the EU-GDPR framework. The user provides ‘Consent’ as the legal basis for processing the first three characters of their postcode and for enabling permissions. The NHS Covid-19 app captures the first part of the user’s postcode as personal data. It then requests permissions for Bluetooth connectivity, necessary for handshakes, and for Push notifications, necessary for file transfer.

The UK NHS app captures ‘Contact Events’ between enabled devices using Bluetooth. The app records and uploads Bluetooth Low Energy handshakes, using the Bluetooth Received Signal Strength Indication (RSSI) to determine proximity. RSSI values are not directly comparable across devices, because the reported value depends on the chip manufacturer, the firmware and the radio circuit. Two different iPhone models will have similar internal Bluetooth components, whereas Android devices span a wide variety of hardware and chipsets. For Android devices it will therefore be harder to measure a consistent RSSI across millions of handshakes. A Covid-19 proximity-based transmission predictor should take the variance between BLE chipsets into account.
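To illustrate how chipset variance could be handled, here is a minimal Python sketch that converts an RSSI reading into an approximate distance. It assumes the log-distance path loss model, a common approximation for radio signals; the published app is not confirmed to use it. The `ChipsetCalibration` type, the `CALIBRATIONS` table, the chipset names and every numeric value are hypothetical placeholders rather than measured data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChipsetCalibration:
    # Expected RSSI (dBm) at a distance of 1 metre. Hypothetical value.
    rssi_at_1m: float
    # Correction (dB) added to raw readings so that this chipset lines up
    # with the reference radio. Hypothetical value.
    rssi_offset: float = 0.0


# Hypothetical calibration table; real values would have to be measured
# per device model.
CALIBRATIONS = {
    "reference": ChipsetCalibration(rssi_at_1m=-60.0),
    "android_chipset_a": ChipsetCalibration(rssi_at_1m=-60.0, rssi_offset=5.0),
}


def estimate_distance_m(rssi_dbm: float, chipset: str,
                        path_loss_exponent: float = 2.0) -> float:
    """Estimate distance in metres using the log-distance path loss model.

    Unknown chipsets fall back to the reference calibration.
    """
    cal = CALIBRATIONS.get(chipset, CALIBRATIONS["reference"])
    corrected_rssi = rssi_dbm + cal.rssi_offset
    exponent = (cal.rssi_at_1m - corrected_rssi) / (10.0 * path_loss_exponent)
    return 10.0 ** exponent
```

With these placeholder values, a raw reading of -65 dBm from `android_chipset_a` is corrected to -60 dBm and maps to roughly 1 metre, the same distance the reference radio would report at -60 dBm. In practice the path loss exponent also varies with the environment (pockets, walls, bodies), so any such estimate is coarse.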

All of the ‘Contact Events’ are stored in the centralised NHSX database. This datastore will most likely hold a simple document record for each event: its duration, the average proximity, the postcode area (first three characters only) where it occurred and which devices were involved. The server will then run queries against that database whenever a user self-registers Covid-19 symptoms, and push notification messages to all registered app users returned by the query. The server logic will most likely take an inclusive approach to notification, so that anybody who was within a 2 metre RSSI range of a person with Covid-19 symptoms for more than 1 second will be notified.
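The record layout and notification query described above can be sketched in a few lines of Python. This is an illustration built on the guesses in this article, not the NHSX implementation: the `ContactEvent` fields, the `contacts_to_notify` function and the in-memory list standing in for the database are all hypothetical, and the 2 metre and 1 second thresholds are the assumed values from the paragraph above.

```python
from dataclasses import dataclass
from typing import Iterable, Set


@dataclass(frozen=True)
class ContactEvent:
    # Hypothetical record shape for one Bluetooth handshake.
    device_a: str
    device_b: str
    duration_s: float
    avg_distance_m: float
    postcode_prefix: str  # first three characters only


def contacts_to_notify(events: Iterable[ContactEvent], reporter_id: str,
                       max_distance_m: float = 2.0,
                       min_duration_s: float = 1.0) -> Set[str]:
    """Return the device IDs that should be notified when reporter_id
    self-registers symptoms, using an inclusive threshold rule."""
    notify = set()
    for event in events:
        # Skip events that do not involve the reporting device.
        if reporter_id not in (event.device_a, event.device_b):
            continue
        close_enough = event.avg_distance_m <= max_distance_m
        long_enough = event.duration_s > min_duration_s
        if close_enough and long_enough:
            other = event.device_b if event.device_a == reporter_id else event.device_a
            notify.add(other)
    return notify


events = [
    ContactEvent("dev-1", "dev-2", duration_s=30.0, avg_distance_m=1.2, postcode_prefix="SW1"),
    ContactEvent("dev-3", "dev-1", duration_s=5.0, avg_distance_m=1.9, postcode_prefix="SW1"),
    ContactEvent("dev-1", "dev-4", duration_s=60.0, avg_distance_m=4.0, postcode_prefix="SW1"),
    ContactEvent("dev-2", "dev-5", duration_s=60.0, avg_distance_m=0.5, postcode_prefix="SW1"),
]
to_notify = contacts_to_notify(events, "dev-1")
```

Here `to_notify` contains `dev-2` and `dev-3`. `dev-4` is excluded because it was too far away, and `dev-5` never met the reporting device. With thresholds this low, almost every recorded handshake triggers a notification, which is what an inclusive approach implies.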

All EU countries must comply with EU-GDPR and all are currently launching their Covid-19 tracking applications. Because these applications must be downloaded by the user, they can only use ‘Consent’ as the legal basis for data capture and must keep a register of the user’s consent. The user must also be able to revoke ‘Consent’ through the simple step of deleting the app from their device. A more pertinent challenge arises within a corporate or public work environment, where there can be a ‘Legitimate Interest’ legal basis for capturing users’ symptoms. For example, a care home could have a legitimate interest in knowing the Covid-19 symptoms of its employees. We are likely to see growth in the use of private apps encouraged by employers if the national centralised government apps do not reach critical mass. Either way, we live in a smartphone world and Bluetooth’s ubiquity is now certain.