Federating Data Between Snowflake and Databricks with DuckDB and Apache Iceberg

If you’re running both Snowflake and Databricks — and most enterprises I work with are — you’ve probably hit the federation problem. Data lives in both platforms, analysts need to query across them, and the obvious solutions (ETL everything into one place, or pay for both platforms’ compute to cross-query) are either slow or expensive.

There’s a cleaner pattern emerging: use Apache Iceberg as the open table format that both platforms write to, and DuckDB as a lightweight engine that reads across both — directly from object storage, without touching either platform’s compute.

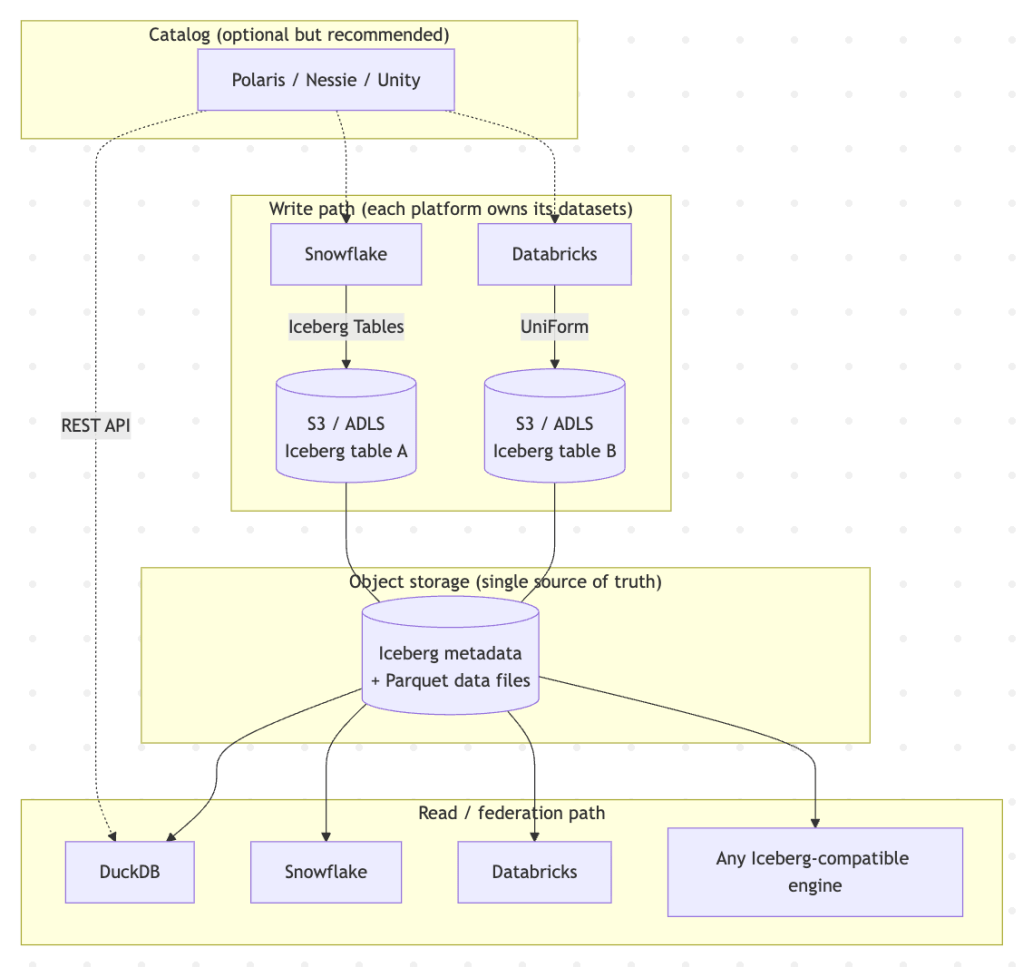

The architecture

The key insight is that Iceberg separates the storage format from the compute engine. Both Snowflake and Databricks write Iceberg-compatible tables to your object storage account. The data files are Parquet, the metadata is JSON/Avro, and any engine that understands the Iceberg spec can read them. DuckDB is one such engine — and it happens to be fast, free, and runs anywhere from a laptop to a container.
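To make the metadata layer concrete, here is a minimal Python sketch that takes a table metadata document (already parsed from JSON) and returns the manifest list of the current snapshot, which is the entry point a reader follows to find the Parquet files. The field names follow the Iceberg table spec; the function name and the trimmed sample document are made up for illustration.

```python
import json
from typing import Optional


def current_manifest_list(metadata: dict) -> Optional[str]:
    """Return the manifest-list path of the table's current snapshot, if any."""
    current_id = metadata.get("current-snapshot-id")
    # A table without snapshots has no current snapshot (null or -1).
    if current_id is None or current_id == -1:
        return None
    for snapshot in metadata.get("snapshots", []):
        if snapshot["snapshot-id"] == current_id:
            return snapshot.get("manifest-list")
    return None


# Hypothetical, heavily trimmed metadata document (illustration only).
sample = json.loads("""
{
  "format-version": 2,
  "location": "s3://my-bucket/warehouse/customer_segments",
  "current-snapshot-id": 2,
  "snapshots": [
    {"snapshot-id": 1, "manifest-list": "s3://my-bucket/warehouse/customer_segments/metadata/snap-1.avro"},
    {"snapshot-id": 2, "manifest-list": "s3://my-bucket/warehouse/customer_segments/metadata/snap-2.avro"}
  ]
}
""")

print(current_manifest_list(sample))
```

Engines such as DuckDB do this traversal for you; the sketch only shows that the lookup is plain file reading and needs no platform compute.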

How each platform writes Iceberg

Snowflake has native Iceberg Tables. You create an external volume pointing to your storage, then create tables that Snowflake manages in Iceberg format:

    -- Snowflake: create an Iceberg table backed by your storage
    CREATE OR REPLACE ICEBERG TABLE customer_segments
      CATALOG = 'SNOWFLAKE'
      EXTERNAL_VOLUME = 'my_s3_volume'
      BASE_LOCATION = 'warehouse/customer_segments/'
    AS SELECT * FROM analytics.customer_segments;

Snowflake writes standard Iceberg metadata and Parquet data files to S3/ADLS. Any Iceberg-compatible reader can access them.

Databricks uses Delta Lake as its native format but publishes Iceberg-compatible metadata via UniForm. When enabled, Databricks generates Iceberg metadata alongside the Delta transaction log:

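    -- Databricks: enable UniForm Iceberg on a Delta table.
    -- Databricks documents IcebergCompatV2 and column mapping as prerequisites
    -- for existing tables; check the requirements for your runtime version.
    ALTER TABLE risk_scores SET TBLPROPERTIES (
      'delta.columnMapping.mode' = 'name',
      'delta.enableIcebergCompatV2' = 'true',
      'delta.universalFormat.enabledFormats' = 'iceberg'
    );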

The underlying data files are shared — UniForm doesn’t duplicate them, it just generates an additional metadata layer that Iceberg readers can follow.

DuckDB as the federation engine

With both platforms writing Iceberg to the same storage layer, DuckDB reads across them using the iceberg extension:

    -- Install extensions (one-time)
    INSTALL iceberg;
    INSTALL httpfs;
    LOAD iceberg;
    LOAD httpfs;

    -- Configure storage credentials
    SET s3_region = 'eu-west-2';
    SET s3_access_key_id = 'AKIA...';
    SET s3_secret_access_key = '...';

    -- Query a Snowflake-written Iceberg table
    SELECT segment, count(*) as customers
    FROM iceberg_scan(
      's3://my-bucket/warehouse/customer_segments/metadata/v1.metadata.json')
    GROUP BY segment;

    -- Query a Databricks-written table (via UniForm)
    SELECT customer_id, risk_score
    FROM iceberg_scan(
      's3://my-bucket/warehouse/risk_scores/metadata/v1.metadata.json');

    -- The real power: join across both platforms
    SELECT cs.segment, avg(rs.risk_score) as avg_risk, count(*) as n
    FROM iceberg_scan('s3://...customer_segments/metadata/...') cs
    JOIN iceberg_scan('s3://...risk_scores/metadata/...') rs
      ON cs.customer_id = rs.customer_id
    GROUP BY cs.segment
    ORDER BY avg_risk DESC;

No data movement. No Snowflake or Databricks compute charges for the cross-platform query. DuckDB pulls the Parquet files it needs directly from storage and joins them locally.
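One practical wrinkle: the queries above pin a specific metadata file, but writers produce a new metadata file on every commit. As a rough sketch, the helper below picks the highest-numbered metadata file from a directory listing. It assumes two common naming styles (`v<N>.metadata.json` and `<NNNNN>-<uuid>.metadata.json`); the function name is hypothetical, and how you list the `metadata/` prefix (boto3, fsspec, and so on) is left out.

```python
import re
from typing import Iterable, Optional

# Assumed naming styles (verify against what your writers actually emit):
#   v3.metadata.json              file-system style tables
#   00003-<uuid>.metadata.json    catalog-managed tables
_METADATA_NAME = re.compile(r"^(?:v(\d+)|(\d+)-[^/]+)\.metadata\.json$")


def latest_metadata_file(filenames: Iterable[str]) -> Optional[str]:
    """Return the metadata file with the highest version number, or None."""
    best_name, best_version = None, -1
    for name in filenames:
        match = _METADATA_NAME.match(name)
        if not match:
            continue  # skip manifests, manifest lists, and other files
        version = int(match.group(1) or match.group(2))
        if version > best_version:
            best_name, best_version = name, version
    return best_name


listing = ["v1.metadata.json", "v10.metadata.json", "v2.metadata.json", "snap-1.avro"]
print(latest_metadata_file(listing))
```

Treat this as a heuristic rather than a guarantee: with concurrent writers the file name alone does not tell you which metadata file is the committed one. That pointer is exactly what a catalog maintains.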

Making it practical: the catalog question

The example above hardcodes metadata file paths, which gets tedious. In production, you want a catalog — a registry that maps table names to their Iceberg metadata locations.
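Until a real catalog is in place, the idea can be approximated with a small lookup table. The sketch below is a stand-in, not a catalog implementation: `TABLE_REGISTRY` and `scan_expr` are hypothetical names, and the paths are the ones from the example queries above.

```python
# Hypothetical in-process registry; in production a catalog service plays this role.
TABLE_REGISTRY = {
    "snowflake.customer_segments":
        "s3://my-bucket/warehouse/customer_segments/metadata/v1.metadata.json",
    "databricks.risk_scores":
        "s3://my-bucket/warehouse/risk_scores/metadata/v1.metadata.json",
}


def scan_expr(table_name: str, registry: dict = TABLE_REGISTRY) -> str:
    """Render a DuckDB iceberg_scan(...) expression for a registered table."""
    location = registry[table_name]  # raises KeyError for unknown tables
    escaped = location.replace("'", "''")  # escape single quotes for SQL
    return f"iceberg_scan('{escaped}')"


query = (
    "SELECT segment, count(*) AS customers "
    f"FROM {scan_expr('snowflake.customer_segments')} "
    "GROUP BY segment"
)
print(query)
```

The generated string can be passed to a DuckDB connection as-is. The obvious weakness is that someone has to keep the registry current, which is the job a catalog automates.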

There are three options emerging:

Apache Polaris (formerly Snowflake’s Polaris Catalog, now open-source) provides a REST catalog that both Snowflake and Databricks can register tables with. DuckDB’s Iceberg extension is adding REST catalog support, which would let you write SELECT * FROM catalog.schema.table instead of pointing at S3 paths.

Nessie is a Git-like catalog for Iceberg tables — it gives you branching and tagging of table state, which is useful for testing schema changes or running what-if analyses across both platforms’ data.

Databricks Unity Catalog can expose Iceberg-compatible metadata via its REST API, though it’s more tightly coupled to the Databricks ecosystem.

For most setups, Polaris is the pragmatic choice — it’s vendor-neutral, both platforms support it, and the DuckDB integration is on the roadmap.

Where DuckDB fits (and where it doesn’t)

DuckDB is ideal for:

- Ad-hoc cross-platform queries — an analyst needs to join a Snowflake marketing table with a Databricks ML scoring table, now, without filing a ticket.

- Data validation and testing — check that what Snowflake wrote matches what Databricks expects, without spinning up either platform.

- Lightweight APIs and microservices — embed DuckDB in a Python service that queries Iceberg tables on demand.

- Local development — work with production table schemas and sample data on a laptop.

- CI/CD pipelines — validate data quality in GitHub Actions without cloud compute costs.
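As an illustration of the validation use case, here is a small sketch that finds keys present in one table but missing from the other. It assumes only a connection object exposing `execute(sql).fetchall()`, which DuckDB's Python connection provides and so does the standard library's `sqlite3`. The demo uses `sqlite3` with made-up rows so it runs without cloud access; the function name is hypothetical.

```python
import sqlite3


def orphan_keys(con, left: str, right: str, key: str) -> list:
    """Return key values present in `left` but missing from `right`.

    `left` and `right` are table expressions: plain table names in this demo,
    or iceberg_scan('...') calls when `con` is a DuckDB connection.
    They are interpolated into SQL, so pass trusted values only.
    """
    sql = (
        f"SELECT l.{key} FROM {left} AS l "
        f"LEFT JOIN {right} AS r ON l.{key} = r.{key} "
        f"WHERE r.{key} IS NULL ORDER BY l.{key}"
    )
    return [row[0] for row in con.execute(sql).fetchall()]


# Stand-in demo: sqlite3 tables with invented rows instead of Iceberg tables.
con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE customer_segments (customer_id INTEGER, segment TEXT)")
con.execute("CREATE TABLE risk_scores (customer_id INTEGER, risk_score REAL)")
con.executemany("INSERT INTO customer_segments VALUES (?, ?)",
                [(1, "gold"), (2, "silver"), (3, "bronze")])
con.executemany("INSERT INTO risk_scores VALUES (?, ?)", [(1, 0.2), (3, 0.7)])

missing = orphan_keys(con, "customer_segments", "risk_scores", "customer_id")
print(missing)  # [2]
```

In a CI job the same function could fail the build when the returned list is non-empty.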

DuckDB is not a replacement for either platform’s distributed compute. For a 10TB aggregation, use Snowflake or Databricks. For joining a few million rows across platforms to answer a question, DuckDB is faster to set up, free to run, and avoids the “which platform do we move the data to?” conversation entirely.

Limitations to know about

DuckDB’s Iceberg support is read-only. It won’t write Iceberg tables back. The write path stays with Snowflake and Databricks.

UniForm metadata generation is asynchronous. There can be a slight lag (seconds to minutes) before DuckDB sees the latest Databricks data via the Iceberg metadata layer.
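If that lag matters for a query, one rough check is to compare the Iceberg metadata's `last-updated-ms` field (part of the Iceberg table spec) with the timestamp of the latest Delta commit. The sketch below assumes you obtain the Delta commit timestamp separately, for example from the table history on the Databricks side; the function name and the timestamps are illustrative.

```python
def iceberg_metadata_is_behind(metadata: dict, latest_delta_commit_ms: int,
                               tolerance_ms: int = 0) -> bool:
    """True if the Iceberg metadata was written before the latest Delta commit.

    `tolerance_ms` absorbs small clock differences between the two timestamps.
    """
    return metadata["last-updated-ms"] + tolerance_ms < latest_delta_commit_ms


# Made-up timestamps: the Delta commit landed 30 seconds after the metadata.
sample_metadata = {"last-updated-ms": 1_700_000_000_000}
print(iceberg_metadata_is_behind(sample_metadata, 1_700_000_030_000))
```

This is a timestamp heuristic, not a version check, so treat a `False` result as "probably caught up" rather than proof.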

Not all Iceberg features are supported. DuckDB reads Iceberg v1 and v2 tables, but advanced features such as merge-on-read and certain partition evolution scenarios are not fully supported. For most analytical tables this isn’t an issue.

Schema evolution needs coordination. If Snowflake evolves a table’s schema, the Iceberg metadata reflects this — but you need to be aware of it on the DuckDB side, particularly for joins with Databricks tables that may have a different schema cadence.
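One way to catch this early is to compare the join columns in the two tables' Iceberg metadata before running the query. The sketch below reads the current schema from each parsed metadata document, using the spec's `schemas` and `current-schema-id` fields with a fallback to the older top-level `schema`. The function names and sample schemas are invented for illustration.

```python
def current_fields(metadata: dict) -> dict:
    """Map column name -> type for the table's current schema."""
    schemas = metadata.get("schemas")
    if schemas:
        wanted = metadata.get("current-schema-id")
        schema = next((s for s in schemas if s.get("schema-id") == wanted), None)
        if schema is None:
            raise ValueError(f"schema id {wanted!r} not found in metadata")
    else:
        # Older v1 metadata may carry only a single top-level "schema".
        schema = metadata["schema"]
    return {field["name"]: field["type"] for field in schema["fields"]}


def join_key_mismatches(left_meta: dict, right_meta: dict, keys: list) -> list:
    """Describe join columns that are missing or typed differently."""
    left, right = current_fields(left_meta), current_fields(right_meta)
    problems = []
    for key in keys:
        if key not in left or key not in right:
            problems.append(f"{key}: missing on one side")
        elif left[key] != right[key]:
            problems.append(f"{key}: {left[key]} vs {right[key]}")
    return problems


# Invented, trimmed metadata documents for the two tables.
snowflake_meta = {"current-schema-id": 1, "schemas": [
    {"schema-id": 0, "fields": [{"id": 1, "name": "customer_id", "type": "int"}]},
    {"schema-id": 1, "fields": [{"id": 1, "name": "customer_id", "type": "long"}]},
]}
databricks_meta = {"schema": {"fields": [{"id": 1, "name": "customer_id", "type": "int"}]}}

print(join_key_mismatches(snowflake_meta, databricks_meta, ["customer_id"]))
```

A type difference is not always fatal, since many engines will cast between integer widths in a join, but surfacing it makes the drift visible before it causes surprises.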

The bigger picture

This pattern — open table format as the interoperability layer, lightweight engines for federation — is where the modern data stack is heading. Iceberg (and to some extent Delta Lake via UniForm) decouples data from compute. DuckDB, Trino, Spark, Snowflake, Databricks, and increasingly other engines all read the same files. The lock-in shifts from “your data is trapped in our platform” to “our compute is faster for your workload” — which is a healthier competitive dynamic.

For organisations running both Snowflake and Databricks, the immediate action is straightforward: start writing Iceberg where you can, enable UniForm on Delta tables, and use DuckDB for the cross-platform queries that would otherwise require expensive data movement or duplicate pipelines.